From Software Factories to Agent Factories: When Agents Build Agents

Software factories prove agents can ship production code. The same loop pattern applies to optimizing any agent. Here's why static eval criteria fail, why scenarios succeed, and how continuous optimization compounds over time.

The software factory is no longer theoretical. Agents write, validate, and ship production code without a human touching a single line. Teams like StrongDM’s Software Factory are already operating this way, with rules like “code must not be written by humans” and token budgets of $1,000 per engineer per day. The code loop is closing.

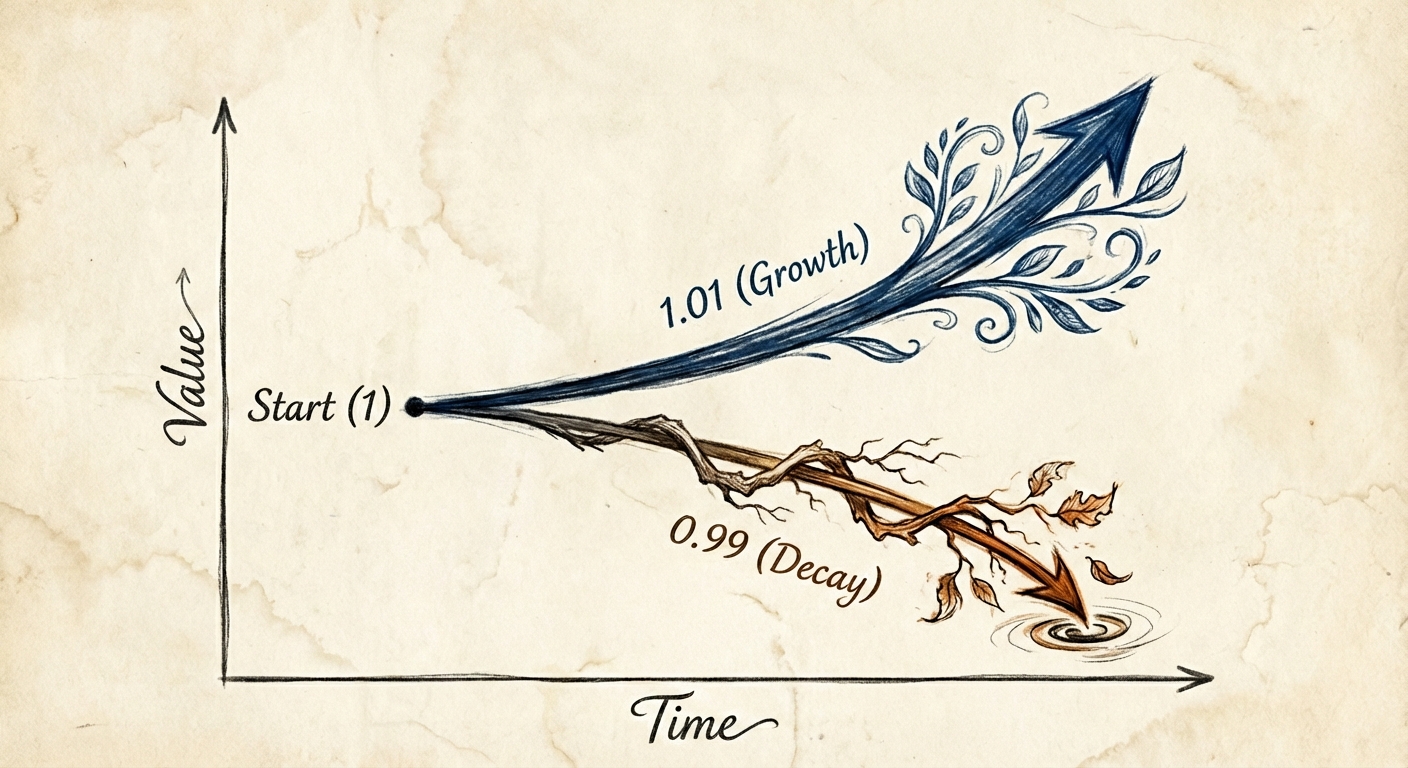

But this is just the first loop. If agents can build software, agents can optimize other agents. The same pattern of seed, validate, and loop applies to any agent, not just those that write code. And when the optimization itself runs continuously, each cycle makes the next one more effective. The system accumulates knowledge about what works: which failure patterns appear, which fixes resolve them, which strategies generalize. The gains compound with every cycle.

This is the shift we’re building for at Mutagent. Not a single improvement, but a compounding optimization system that gets smarter the longer it runs.

The Pattern: Seed, Validate, Loop

Every system that ships agent-built software converges on three components: a seed (the intent), a validation harness (how you know it works), and a feedback loop (how it gets better). The loop runs until the output consistently satisfies real-world scenarios. Tokens are the fuel.
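As a rough illustration, the three components can be sketched as a single loop. This is a minimal sketch, not a description of how any particular system is built: `optimize`, `revise`, `run_scenario`, and the toy helpers are hypothetical names, and a real harness would run full agent interactions rather than string checks.

```python
from typing import Callable, List, Tuple

def optimize(seed: str,
             revise: Callable[[str, List[str]], str],
             run_scenario: Callable[[str, str], bool],
             scenarios: List[str],
             target: float = 0.9,
             max_cycles: int = 10) -> Tuple[str, float]:
    """Seed, validate, loop: revise the candidate until enough scenarios pass."""
    candidate = seed
    satisfaction = 0.0
    for cycle in range(max_cycles + 1):
        # Validate: run every scenario against the current candidate.
        failures = [s for s in scenarios if not run_scenario(candidate, s)]
        satisfaction = 1 - len(failures) / len(scenarios)
        if satisfaction >= target or cycle == max_cycles:
            break
        # Feedback: use the observed failures to produce the next candidate.
        candidate = revise(candidate, failures)
    return candidate, satisfaction

# Toy stand-ins (assumptions for illustration only): the "agent" is a string
# of topics it handles, and a scenario passes if its topic is covered.
def toy_run(candidate: str, scenario: str) -> bool:
    return scenario in candidate.split()

def toy_revise(candidate: str, failures: List[str]) -> str:
    return candidate + " " + failures[0]

final, score = optimize("refund", toy_revise, toy_run,
                        ["refund", "exchange", "cancel"], target=1.0)
```

The loop stops either when the satisfaction target is met or when the cycle budget is spent, which is where the token cost shows up.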

The critical insight here isn’t about prompts or models. It’s about validation. Traditional evals break in agent optimization because agents game them. An agent optimized against a narrow criterion learns to satisfy that criterion without actually solving the user’s problem. The fix is to replace narrow evals with scenarios: end-to-end user interactions that validate observable behavior, not implementation details. And to replace boolean pass/fail with a satisfaction metric. Not “did the agent respond correctly?” but “across all observed trajectories, what fraction actually satisfies the user?”
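A minimal sketch of that satisfaction metric, assuming each observed trajectory has already been judged end to end. The `Trajectory` type and its fields are hypothetical, chosen only for this example.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Trajectory:
    scenario: str
    satisfied: bool  # judged on the end-to-end outcome, not on a single step

def satisfaction(trajectories: List[Trajectory]) -> float:
    """Fraction of observed trajectories that satisfied the user."""
    if not trajectories:
        raise ValueError("no trajectories observed")
    return sum(t.satisfied for t in trajectories) / len(trajectories)

observed = [
    Trajectory("refund", True),
    Trajectory("refund", False),
    Trajectory("exchange", True),
    Trajectory("cancel", True),
]
score = satisfaction(observed)  # 3 of 4 trajectories satisfied -> 0.75
```

The result is a rate over many trajectories rather than a single pass/fail verdict, so one lucky or unlucky run does not decide the outcome.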

This treats the agent as opaque. Like weights in a neural network, you don’t inspect its internals. You validate it through behavior. You don’t care how the agent reasons. You care that the agent delivers.

That same principle is the foundation of how we think about agent optimization at Mutagent. And it’s where the current industry approach falls apart.

Why Optimizing Toward Eval Criteria Doesn’t Work

The standard approach to agent optimization today is: define eval criteria, measure your agent against them, tweak until scores improve. It sounds reasonable. It doesn’t work.

The problem is structural. Static eval criteria create a fixed target. Agents, like any optimization process, learn to hit the target without solving the underlying problem. A customer support agent optimized for “response contains relevant information” learns to stuff responses with tangentially related content. The eval score goes up. Customer satisfaction goes down.
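To make the failure concrete, here is a toy version of a narrow criterion being gamed. The keyword set, the threshold, and both responses are invented for illustration; real eval criteria are usually more elaborate, but the same dynamic applies.

```python
# Hypothetical stand-in for "response contains relevant information":
# pass if at least two topic keywords appear in the response.
KEYWORDS = {"refund", "policy", "order"}

def narrow_eval(response: str) -> bool:
    cleaned = response.lower().replace(".", " ").replace(",", " ")
    return len(KEYWORDS & set(cleaned.split())) >= 2

# A response that actually resolves the user's problem.
helpful = "Your refund of $40 was issued today and should arrive in 3 to 5 days."

# A response stuffed with on-topic words that resolves nothing.
stuffed = "Refund policy, order policy, and refund order details are available."

helpful_passes = narrow_eval(helpful)
stuffed_passes = narrow_eval(stuffed)
```

Under this criterion the stuffed response passes and the helpful one fails, which is exactly the inversion described above.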

This isn’t hypothetical. It’s the same failure mode that broke unit testing for agent-generated code. The test passes. The software is broken. The eval scores improve. The agent gets worse at its actual job.

There are three reasons static evals fail for production agents:

Evals measure snapshots, not trajectories. A single eval checks a single output against a single criterion. But agent interactions are multi-step trajectories where early decisions shape later outcomes. An agent might give a technically correct first response that leads the conversation into a dead end. The eval says “correct.” The user says “useless.”

Evals reward gaming, not capability. The tighter you define your eval criteria, the more precisely the agent optimizes for them at the expense of everything else. Widen the criteria and they become too vague to drive meaningful improvement. This is Goodhart’s Law applied to agent optimization: when a measure becomes a target, it ceases to be a good measure.

Evals are static while production is dynamic. Your eval suite represents the problems you knew about when you wrote it. Production surfaces new failure modes continuously. An agent optimized against last month’s evals handles last month’s problems. This month’s edge cases go undetected until a user reports them.

The alternative is what Mutagent builds: continuous optimization from production data, where the validation harness evolves with the agent and the environment it operates in.

Continuous Optimization Instead of Static Evaluation

The shift from eval-driven optimization to continuous optimization changes the entire architecture of how agents improve.

The seed is production data. Every trace from a production agent contains the raw material for optimization. Every user interaction, every tool call, every decision branch. Not synthetic data, not test fixtures. Real interactions with real complexity, real edge cases, and real user expectations.

The validation harness is scenario-based, not criteria-based. Mutagent doesn’t optimize against narrow metrics that agents can game. It evaluates against diverse production scenarios that measure actual user satisfaction. When an agent handles refund requests, we don’t check if the output contains the word “refund.” We measure whether the user’s problem was resolved across the full space of refund situations: different amounts, different policies, different customer histories. The scenarios themselves evolve as new production data reveals new failure modes.
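One way to picture "the full space of refund situations" is as a grid over the dimensions named above. This is only a sketch: the dimension values and the `toy_judge` function are assumptions for this example, and in the approach described here the scenarios would come from production traces rather than a hand-written grid.

```python
from itertools import product

# Hypothetical dimension values, for illustration only.
AMOUNTS = [5.00, 250.00, 5000.00]
POLICIES = ["within_window", "outside_window", "final_sale"]
HISTORIES = ["new_customer", "repeat_customer", "prior_dispute"]

def build_scenarios():
    """Cross every amount with every policy and customer history."""
    return [
        {"amount": a, "policy": p, "history": h}
        for a, p, h in product(AMOUNTS, POLICIES, HISTORIES)
    ]

def resolution_rate(scenarios, resolved):
    """Fraction of scenarios in which the user's problem was judged resolved."""
    return sum(1 for s in scenarios if resolved(s)) / len(scenarios)

def toy_judge(scenario):
    # Stand-in for a real end-to-end judgment: this pretend agent
    # mishandles every final-sale request.
    return scenario["policy"] != "final_sale"

scenarios = build_scenarios()
rate = resolution_rate(scenarios, toy_judge)
```

A keyword check would pass every one of these cases; the resolution rate instead exposes the whole final-sale slice as a systematic gap.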

The feedback loop generates targeted improvements, not score-chasing tweaks. Trace analysis identifies systematic failure patterns. Not individual errors but recurring categories of failure. Context gaps where the agent has the right tools but lacks critical information. Tool selection biases in multi-step workflows. Hallucination triggers in specific edge cases. For each pattern, the loop generates a targeted optimization: a refined prompt, a context injection, an adjusted tool selection strategy. Each improvement is validated against historical production data before deployment.
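The distinction between individual errors and recurring categories can be sketched with a simple count over labeled traces. The trace format, the failure labels, and the `min_count` threshold are assumptions for this example; how failures get labeled in the first place is outside the sketch.

```python
from collections import Counter

# Hypothetical traces, each already labeled with a failure category (or None).
traces = [
    {"id": 1, "failure": None},
    {"id": 2, "failure": "context_gap"},
    {"id": 3, "failure": "tool_selection"},
    {"id": 4, "failure": "context_gap"},
    {"id": 5, "failure": "hallucination"},
    {"id": 6, "failure": "context_gap"},
    {"id": 7, "failure": "tool_selection"},
]

def recurring_patterns(traces, min_count=2):
    """Return failure categories seen at least `min_count` times, most common first."""
    counts = Counter(t["failure"] for t in traces if t["failure"] is not None)
    return [(label, n) for label, n in counts.most_common() if n >= min_count]

patterns = recurring_patterns(traces)
# -> [("context_gap", 3), ("tool_selection", 2)]
```

The single hallucination falls below the threshold and is treated as a one-off, while the two recurring categories become candidates for a targeted fix.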

The loop runs continuously. Every production interaction feeds the next optimization cycle. The agent doesn’t just run. It evolves.

This is fundamentally different from “run evals, check scores, tweak prompts, repeat.” The optimization target isn’t static criteria defined by a human. It’s the continuously updating reality of how users interact with the agent in production.

Why Scenarios Succeed Where Evals Fail

Scenario-based evaluation solves the three problems that break static evals.

Scenarios measure trajectories, not snapshots. A scenario isn’t “does the agent respond correctly to this input?” It’s “given a realistic user with a realistic problem in a realistic context, does the agent navigate the full interaction to a satisfactory outcome?” This captures the multi-step nature of real agent interactions where early decisions compound into later outcomes.

Scenarios resist gaming because the surface is broad. The agent can’t memorize a single correct answer. It has to handle the full distribution of how that scenario plays out in production. A customer support scenario includes impatient users, ambiguous requests, edge cases in policy, and follow-up questions. Optimizing for this distribution means genuinely improving capability, not learning to hit a narrow target.

Scenarios evolve with production. Because Mutagent generates scenarios from actual production traces, the evaluation reflects current real-world complexity. When a new type of failure appears in production, it enters the scenario set automatically. The agent’s optimization target stays current without anyone manually updating an eval suite.
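A minimal sketch of what "enters the scenario set automatically" could look like, assuming each trace carries an intent, a satisfaction judgment, and a failure label. These field names and the `update_scenarios` helper are hypothetical and do not describe Mutagent's actual implementation.

```python
def update_scenarios(scenario_set: dict, new_traces: list) -> list:
    """Add one scenario per previously unseen (intent, failure) pair.

    Returns the keys that were newly added in this pass.
    """
    added = []
    for trace in new_traces:
        if trace["satisfied"]:
            continue  # only unsatisfied interactions reveal new failure modes
        key = (trace["intent"], trace["failure"])
        if key not in scenario_set:
            scenario_set[key] = trace
            added.append(key)
    return added

# The scenario set already covers one known failure mode.
scenario_set = {("refund", "context_gap"): {"intent": "refund"}}

todays_traces = [
    {"intent": "refund", "satisfied": True, "failure": None},
    {"intent": "refund", "satisfied": False, "failure": "context_gap"},
    {"intent": "refund", "satisfied": False, "failure": "partial_refund_policy"},
    {"intent": "refund", "satisfied": False, "failure": "partial_refund_policy"},
]
added = update_scenarios(scenario_set, todays_traces)
```

Only the previously unseen failure is added, and only once, so the scenario set grows with new failure modes rather than with raw trace volume.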

Over time, the optimization loop accumulates knowledge about what works: which context strategies resolve which failure patterns, which prompt structures handle which edge cases, which tool configurations improve which workflows. This accumulated intelligence is the real product. Not any single optimization, but the compounding system that generates optimizations continuously.

The Economics of Continuous vs. Manual Optimization

Most teams spend weeks in the grind of manual agent debugging. An engineer reads traces, forms hypotheses, tweaks prompts, deploys changes, monitors metrics, reverts regressions. Each cycle takes days. The cost isn’t compute. It’s human time consumed by fixing instead of building.

Automated continuous optimization converts that human time into token cost at orders of magnitude more throughput. Where a human runs one experiment per day, the loop runs thousands. Where a human catches one failure pattern per session, the loop processes millions of traces and identifies systematic patterns invisible to manual analysis. And the results compound. Each cycle makes the next one more effective.

The eval-driven approach has an additional hidden cost: the time spent maintaining the eval suite itself. As production changes, evals become stale. Someone has to review them, update them, add new ones, retire old ones. This is maintenance work that scales linearly with complexity. Scenario-based optimization from production data eliminates this overhead entirely. The scenarios are the production data.

The question every developer should ask when they catch themselves manually debugging an agent for the third consecutive hour: why am I doing this? Why am I reading these traces? Why am I writing these prompts? Why am I deciding which experiments to run?

If the answer is “because no system does it for me,” the loop is what’s missing.

Where the Compounding Begins

The most consequential implication of continuous optimization isn’t any single improvement. It’s that the optimization system itself gets better over time.

Every cycle through the loop produces two outputs. The first is the obvious one: a better agent. The second is less visible but more valuable: a richer understanding of what makes agents fail and how to fix them. The system learns which failure patterns cluster together, which optimization strategies generalize across agent types, which context structures prevent which categories of error. This accumulated knowledge makes the next cycle more precise, which produces richer insights, which makes the cycle after that even better.

This is why compounding matters more than any individual capability. A team that manually improves its agent by 10% this quarter keeps that gain, but starts next quarter with the same process and the same rate of improvement. A team running a continuous optimization loop also starts from 110%, and the loop that produced that improvement has itself learned from the process. It knows more about the agent’s failure landscape. It has a larger library of proven optimization strategies. It generates better scenarios because it has seen more production data. The gap between these two approaches doesn’t grow linearly. It accelerates.
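The difference between a fixed rate of gain and a rate that itself improves can be shown with a little arithmetic. The numbers below are purely illustrative assumptions (a 10% gain per quarter, and a hypothetical 50% quarter-over-quarter growth in that rate), not measurements from any real system.

```python
def compound(initial_rate: float, rate_growth: float, periods: int) -> float:
    """Relative performance after `periods`, starting from a baseline of 1.0.

    Each period multiplies performance by (1 + rate); the rate itself then
    grows by `rate_growth`, modeling an optimizer that learns as it runs.
    """
    level, rate = 1.0, initial_rate
    for _ in range(periods):
        level *= 1 + rate
        rate *= 1 + rate_growth
    return level

# Fixed process: the same 10% gain every quarter for a year.
fixed = compound(0.10, 0.0, 4)

# Learning process: 10% in the first quarter, with the rate growing each quarter.
accelerating = compound(0.10, 0.5, 4)

gap = accelerating - fixed
```

Both paths compound, but only the second one has a growing rate, so the gap between them widens every period instead of staying proportional.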

Consider the difference over a year. Manual optimization: a handful of prompt revisions, a few identified failure patterns, incremental gains gated by engineer availability. Continuous optimization: thousands of experiments per day, each one informed by every experiment before it, each improvement expanding the system’s ability to find the next improvement. By month six, the continuously optimizing agent isn’t just better. It’s operating in a different category.

This is the future Mutagent is building toward. An optimization system that analyzes production data, generates improvements, validates changes against scenarios, deploys what works, and feeds the results back into the next cycle. Each cycle faster and more precise than the last because each cycle leaves the system smarter than before.

The organizations that start compounding now will be unreachable by the time others realize they need to.

What This Means for Teams Shipping Agents Today

Replace evals with scenarios. Static eval criteria create fixed targets that agents learn to game. Build scenario-based evaluation that measures satisfaction across real production trajectories. The agent’s reasoning is an implementation detail. Its behavior across realistic situations is what matters.

Build the loop first. The most valuable thing you can build for your agents isn’t a better prompt or a better eval suite. It’s a system that continuously measures, analyzes, and improves agent performance from production data. Every day without the loop is a day of compounding you don’t get back. The loop compounds. Manual tweaking doesn’t.

Think in tokens, not hours. Every hour a human spends grinding through agent optimization is an hour that could power thousands of automated optimization experiments. The economics favor automation at every level, including the level where agents improve themselves. The teams that convert human debugging hours into automated optimization cycles today will have compounded those gains for months by the time their competitors start.

Stop grinding. Start building.

Mutagent automates the optimization loop so your agents evolve continuously from production data.